Ngày 02/04 vừa qua, hội thảo trực tuyến do Gimasys phối hợp cùng Google Cloud…

How to simplify building RAG pipelines in BigQuery with Document AI Layout Parser

Document preprocessing is a common stumbling block when building retrieval-augmented generation (RAG) pipelines. It often requires python skills and external libraries to parse documents like PDFs into manageable chunks that can be used to generate embeddings. In this article, we’ll help you get the most value out of your massive document repositories and explore how Google can help you transform complex documents into structured data that’s ready to feed AI models.

Streamline document processing in BigQuery

BigQuery now provides document preprocessing capabilities for Rag pipelines and other document-centric applications through tight integration with Document AI. The ML.PROCESS_DOCUMENT function can help you access new processors, including Document AI’s Layout Parser processor, which lets you parse PDF documents with SQL syntax..

The GA of ML.PROCESS_DOCUMENT provides developers with new benefits:

- Improved scalability: The ability to handle larger documents up to 100 pages and process them faster

- Simplified syntax: Streamlined SQL syntax to interact with Document AI’s Layout Parser processor

- Additional documents: Access additional documentation with AI processor capabilities such as Layout parsers, to generate the document blocks needed for RAG pipelines

In particular, supplementary documentation is a critical – yet challenging – component of building a Rag pipeline. An AI document parser will help simplify this process.

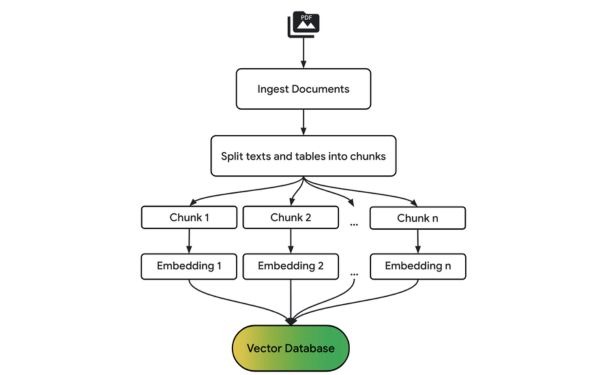

Document preprocessing for RAG

Breaking down large documents into smaller, semantically related units improves the relevance of the retrieved information, leading to more accurate answers from a large language model (LLM).

Generating metadata such as document source, chunk location, and structural information alongside chunks can further enhance your RAG pipeline, making it possible to filter, refine your search results, and debug your code.

The following diagram provides a high-level overview of the preprocessing steps in a basic RAG pipeline:

Build a RAG pipeline in BigQuery

Comparing financial documents like earnings statements can be challenging due to their complex structure and mix of text, figures, and tables. Let’s demonstrate how to build a RAG pipeline in BigQuery using Document AI's Layout Parser to analyze the Federal Reserve’s 2023 Survey of Consumer Finances (SCF) report. Feel free to follow along in the notebook here. Survey of Consumer Finances (SCF) 2023 report of the US Federal Reserve.

Dense financial documents like the Federal Reserve’s SCF report present significant challenges for traditional parsing techniques. This document spans nearly 60 pages and contains a mix of text, detailed tables, and embedded charts, making it difficult to reliably extract information. Document AI’s Layout Parser excels in these scenarios, effectively identifying and extracting key information from complex document layouts such as these.

Building a BigQuery RAG pipeline with Document AI’s Layout Parser consists of the following broad steps.

Create a Layout Parser processor

In Document AI, create a new processor with the type LAYOUT_PARSER_PROCESSOR. Then create a remote model in BigQuery that points to this processor, allowing BigQuery to access and process the documents.

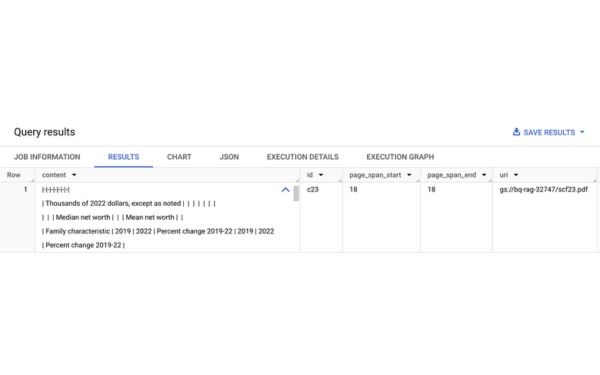

Call the processor to create chunks

To access PDFs in Google Cloud Storage, begin by creating an object table over the bucket with the earnings statements. Then, use the ML.PROCESS_DOCUMENT function to pass the objects through to Document AI and return results in BigQuery. Document AI analyzes the document and chunks the PDF. The results are returned as JSON objects and can easily be parsed to extract metadata like source URI and page number.

SELECT * FROM ML.PROCESS_DOCUMENT(

MODEL docai_demo.layout_parser,

TABLE docai_demo.demo,

PROCESS_OPTIONS => (

JSON ‘{“layout_config”: {“chunking_config”: {“chunk_size”: 300}}}’)

);

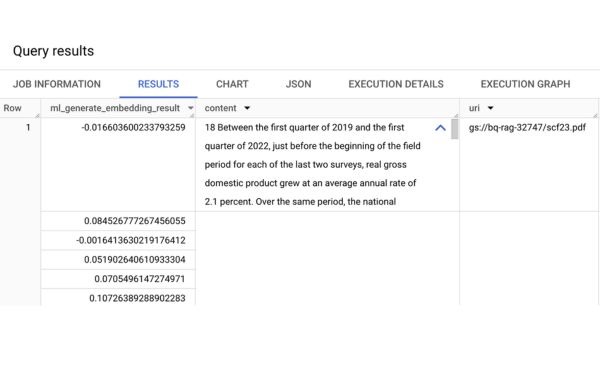

Create vector embeddings for the chunks

To enable semantic search and retrieval, we’ll generate embeddings for each document chunk using the ML.GENERATE_EMBEDDING function and write them to a BigQuery table. This function takes two arguments:

- A remote model, which calls a Vertex AI embedding endpoints

- A column from a BigQuery table that contains data for embedding

Create a vector index on the embeddings

To efficiently search through large chunks based on semantic similarity, we’ll create a vector index on the embeddings. Without a vector index, performing a search requires comparing each query embedding to every embedding in your dataset, which is computationally expensive and slow when dealing with a large number of chunks. Vector indexes use techniques like approximate nearest neighbor search to speed up this process.

CREATE VECTOR INDEX my_index

ON docai_demo.embeddings(ml_generate_embedding_result)

OPTIONS(index_type = “TREE_AH”,

distance_type = “EUCLIDIAN”

);

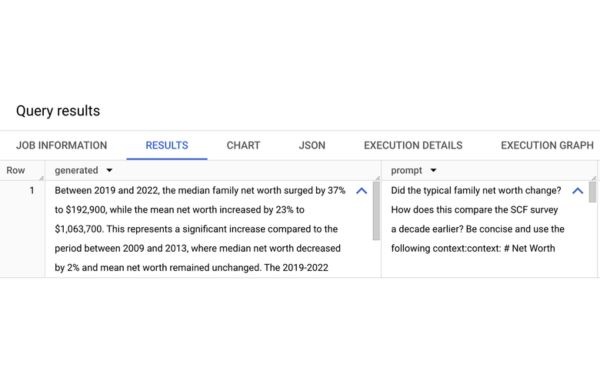

Retrieve relevant chunks and send to LLM for answer generation

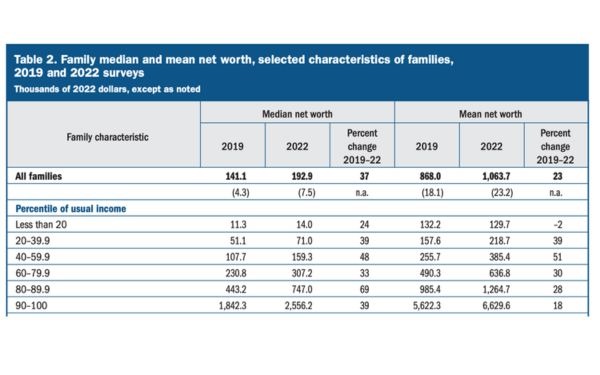

Now we can perform a vector search to find chunks that are semantically similar to our input query. In this case, we ask how typical family net worth changed in the three years this report covers.

SELECT

ml_generate_text_llm_result AS generated,

prompt

FROM

ML.GENERATE_TEXT( MODEL `docai_demo.gemini_flash`,

(

SELECT

CONCAT( ‘Did the typical family net worth change? How does this compare the SCF survey a decade earlier? Be concise and use the following context:’,

STRING_AGG(FORMAT(“context: %s and reference: %s”, base.content, base.uri), ‘,\n’)) AS prompt,

FROM

VECTOR_SEARCH( TABLE

`docai_demo.embeddings`,

‘ml_generate_embedding_result’,

(

SELECT

ml_generate_embedding_result,

content AS query

FROM

ML.GENERATE_EMBEDDING( MODEL `docai_demo.embedding_model`,

(

SELECT

‘Did the typical family net worth increase? How does this compare the SCF survey a decade earlier?’ AS content

)

)

),

top_k => 10,

OPTIONS => ‘{“fraction_lists_to_search”: 0.01}’)

),

STRUCT(512 AS max_output_tokens, TRUE AS flatten_json_output)

);

The retrieved chunks are then sent through the ML.GENERATE_TEXT hàm này gọi điểm cuối của Gemini 1.5 Flash và tạo ra câu trả lời ngắn gọn cho câu hỏi của Google..

And we get an answer: median family net worth increased 37% from 2019 to 2022, which is a significant increase compared to the period a decade earlier, which noted a 2% decrease. Notice that if you check the original document, this information is within text, tables, and footnotes — traditionally areas that are tricky to parse and infer results together!

This example demonstrated a basic RAG flow, but real-world applications often require continuous updates. Imagine a scenario where new financial reports are added daily to a Cloud Storage bucket. To keep your RAG pipeline up-to-date, consider using BigQuery Workflows or Cloud Composer to incrementally process new documents and generate embeddings in BigQuery. Vector indexes are automatically refreshed when the underlying data changes, ensuring that you always query the most current information.

Get started with document parsing in BigQuery

The integration of Document AI's Layout Parser with BigQuery makes it easier for developers to build powerful RAG pipelines. By leveraging ML.PROCESS_DOCUMENT and other BigQuery machine learning functions, you can streamline document preprocessing, generate embeddings, and perform semantic search — all within BigQuery using SQL. Contact Gimasys today to discover more about this solution!