Maximizing Reliability, Minimizing Costs: Right-Sizing Kubernetes Workloads

Do you know how much money you could save by adjusting workload requests to better reflect actual usage? If you're not rightsizing your workloads, you might be overpaying for resources they aren't even using or, worse, putting them at risk of reliability issues due to under-provisioning.

As we discussed before, setting resource requests is the most important thing you can do to increase the reliability of your Kubernetes workloads. In this post, we help you act on the second key finding from the State of Kubernetes Cost Optimization report.

“The research … found that workload rightsizing has the biggest opportunity to reduce resource waste.”

State of Kubernetes Cost Optimization Report

According to Google's research, workload rightsizing is the most important golden signal. It measures how well developers use the CPU and memory they have requested for their applications.
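
To make the signal concrete, here is a minimal sketch of how usage could be compared against requests for a single workload. The `WorkloadSample` type, the `classify` helper, and the 50% and 100% thresholds are illustrative assumptions, not definitions taken from the report.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkloadSample:
    """Hypothetical snapshot of one workload's requests and observed usage."""
    name: str
    cpu_requested_cores: float
    cpu_used_cores: float
    memory_requested_bytes: int
    memory_used_bytes: int


def utilization(used: float, requested: float) -> Optional[float]:
    """Fraction of the requested resource that is actually used.

    Returns None when nothing was requested, since the ratio is undefined.
    """
    if requested <= 0:
        return None
    return used / requested


def classify(sample: WorkloadSample, low: float = 0.5, high: float = 1.0) -> str:
    """Label a workload using assumed thresholds (low and high are illustrative)."""
    ratios = [
        utilization(sample.cpu_used_cores, sample.cpu_requested_cores),
        utilization(sample.memory_used_bytes, sample.memory_requested_bytes),
    ]
    if any(r is None for r in ratios):
        return "no-requests"        # requests are missing for CPU or memory
    if any(r > high for r in ratios):
        return "under-provisioned"  # usage exceeds what was requested
    if all(r < low for r in ratios):
        return "over-provisioned"   # most of the request sits idle
    return "ok"


if __name__ == "__main__":
    sample = WorkloadSample("checkout", 1.0, 0.2, 1_000_000_000, 300_000_000)
    print(sample.name, classify(sample))
```

A workload that uses 20% of its requested CPU and 30% of its requested memory would be flagged as over-provisioned under these assumed thresholds; a workload with no requests at all is reported separately because it is a reliability risk rather than a cost one.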

Rightsizing is challenging

It can be quite difficult to predict the resource needs of your applications, and historically developers have not had to. In traditional data center environments, resources were typically over-provisioned upfront to cover peak demand and future growth, so developers didn't need to predict resource needs accurately; the excess capacity covered them. In cloud environments, by contrast, resources are consumed on demand. Finding the balance between efficiency and reliability can feel like a delicate act.

Tools for workload rightsizing

Cloud Monitoring and the GKE user interface both include tools that you can use to rightsize your workloads running on Google Kubernetes Engine (GKE).

Rightsizing in the console

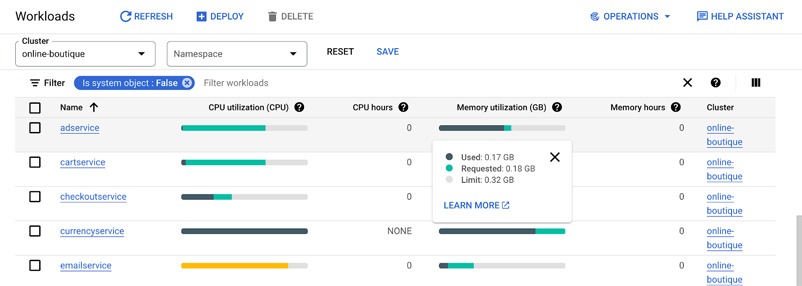

The Workload Cost Optimization tab helps you identify workloads that can be optimized by displaying the resources used versus the resources requested.

To take advantage of potential cost savings, you can drill into clusters to see workload-level resource recommendations.

To view workload resource recommendations (available for Deployment objects only):

- In the console, go to GKE Cost Optimization.

- Select a cluster.

- Click Workloads > Cost Optimization.

- Choose a Deployment workload.

- On the workload's detail page, select Actions > Scale > Edit Resource Requests.
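
If you prefer to apply a recommendation outside the console, the last step can be sketched as a patch to the Deployment's pod template. The helper names below are hypothetical, and the sketch assumes the recommendation arrives as CPU cores and memory bytes; it only builds the patch body and does not call any cluster API.

```python
import json
import math


def format_cpu(cores: float) -> str:
    """Render CPU cores as Kubernetes millicores, rounded up (0.25 -> '250m')."""
    # round() first so float noise such as 70.00000000000001 does not bump the value
    return f"{math.ceil(round(cores * 1000, 6))}m"


def format_memory(num_bytes: int) -> str:
    """Render bytes as mebibytes, rounded up (268435456 -> '256Mi')."""
    return f"{math.ceil(num_bytes / 2**20)}Mi"


def build_requests_patch(container_name: str, cpu_cores: float, memory_bytes: int) -> dict:
    """Build a patch body that sets resource requests on one container.

    Containers in the pod template are matched by name, so only the named
    container's requests are changed.
    """
    return {
        "spec": {
            "template": {
                "spec": {
                    "containers": [
                        {
                            "name": container_name,
                            "resources": {
                                "requests": {
                                    "cpu": format_cpu(cpu_cores),
                                    "memory": format_memory(memory_bytes),
                                }
                            },
                        }
                    ]
                }
            }
        }
    }


if __name__ == "__main__":
    # "web" is a placeholder container name
    print(json.dumps(build_requests_patch("web", 0.25, 268435456), indent=2))
```

The printed JSON could then be supplied to a command such as `kubectl patch deployment <name> --patch '<json>'`. Review it first: changing requests in the pod template triggers a rollout of the Deployment.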

Rightsizing with Cloud Monitoring

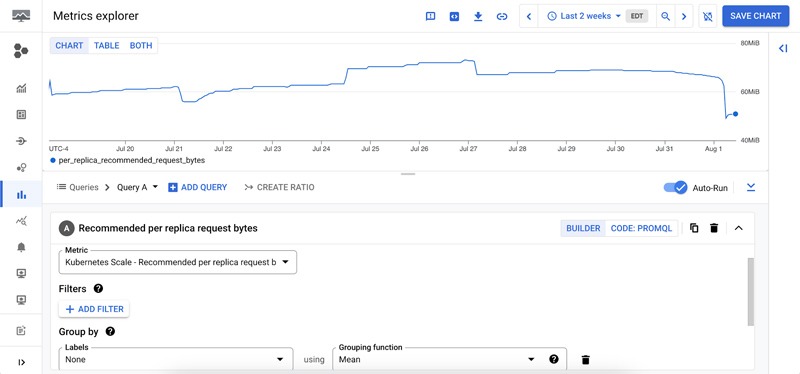

Cloud Monitoring provides built-in Vertical Pod Autoscaler (VPA) scale recommendation metrics. You can use them to monitor the performance of your workloads and to identify opportunities to rightsize them, without needing to create VPA objects.

To view these metrics:

- Go to Cloud Monitoring > Metrics Explorer in the console.

- In the Metric dropdown, select one of the following metrics:

  - Memory recommendation: Kubernetes Scale > autoscaler > Recommended per replica request bytes

  - CPU recommendation: Kubernetes Scale > autoscaler > Recommended per replica request cores
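
The same metrics can also be selected with a Cloud Monitoring filter expression instead of the dropdown. The sketch below only builds the filter strings. The two metric type names and the `k8s_scale` resource labels reflect my understanding of how these metrics are exposed, so treat them as assumptions and confirm them in Metrics Explorer before relying on them. `build_filter` is a hypothetical helper.

```python
from typing import Optional

# Assumed metric types for the two recommendations; verify in Metrics Explorer.
MEMORY_RECOMMENDATION = (
    "kubernetes.io/autoscaler/container/memory/per_replica_recommended_request_bytes"
)
CPU_RECOMMENDATION = (
    "kubernetes.io/autoscaler/container/cpu/per_replica_recommended_request_cores"
)


def build_filter(
    metric_type: str,
    cluster_name: Optional[str] = None,
    namespace_name: Optional[str] = None,
    controller_name: Optional[str] = None,
) -> str:
    """Build a Cloud Monitoring filter for one recommendation metric.

    Optional arguments narrow the result to a cluster, namespace, or
    controller (for example a Deployment name). Label names are assumptions.
    """
    clauses = [
        f'metric.type = "{metric_type}"',
        'resource.type = "k8s_scale"',
    ]
    labels = {
        "cluster_name": cluster_name,
        "namespace_name": namespace_name,
        "controller_name": controller_name,
    }
    for label, value in labels.items():
        if value is not None:
            clauses.append(f'resource.labels.{label} = "{value}"')
    return " AND ".join(clauses)


if __name__ == "__main__":
    # "prod-cluster" and "checkout" are placeholder names
    print(build_filter(CPU_RECOMMENDATION, "prod-cluster", controller_name="checkout"))
    print(build_filter(MEMORY_RECOMMENDATION, "prod-cluster", controller_name="checkout"))
```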

Rightsizing at scale

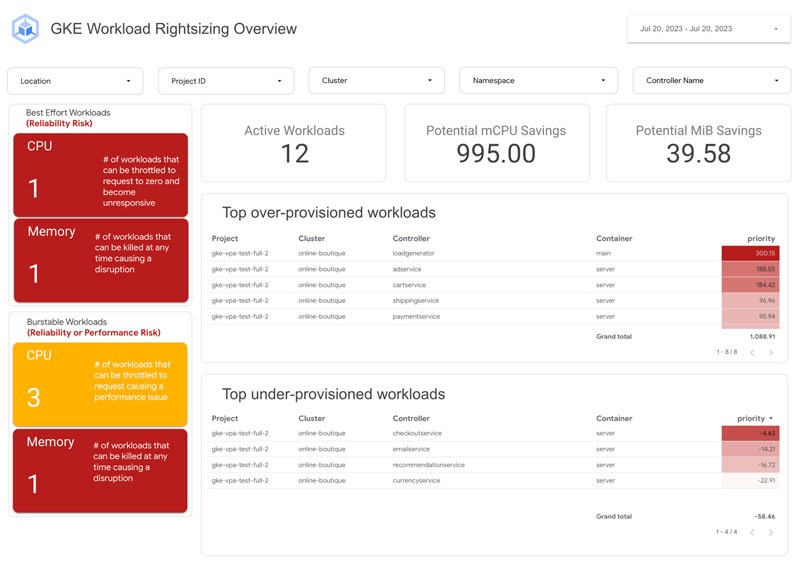

If you’re interested in viewing recommendations across clusters and projects, we've created a guide that you can use today to right-size your GKE workloads at scale. The solution uses your clusters' actual metric data together with the built-in workload recommendations provided by Cloud Monitoring, so you can determine the resource requirements for all your workloads without creating additional VPA objects in each cluster. The guide walks you through deploying the solution.
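
Once recommendations are collected for many workloads, a natural next step is to rank clusters by how much could be reclaimed. The sketch below is not the solution from the guide; it is a small illustration over made-up records, and the `Recommendation` type and its field names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Tuple


class Recommendation(NamedTuple):
    """Hypothetical record: one workload's current request and its recommendation."""
    project: str
    cluster: str
    workload: str
    replicas: int
    cpu_requested_cores: float
    cpu_recommended_cores: float


def cpu_delta(rec: Recommendation) -> float:
    """Cores that could be freed (positive) or are missing (negative), over all replicas."""
    return (rec.cpu_requested_cores - rec.cpu_recommended_cores) * rec.replicas


def summarize(recs: Iterable[Recommendation]) -> Dict[Tuple[str, str], float]:
    """Sum the CPU delta per (project, cluster) pair."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for rec in recs:
        totals[(rec.project, rec.cluster)] += cpu_delta(rec)
    return dict(totals)


if __name__ == "__main__":
    # Sample data invented for illustration only
    recs = [
        Recommendation("proj-a", "cluster-1", "frontend", 4, 2.0, 0.5),
        Recommendation("proj-a", "cluster-1", "worker", 2, 0.25, 0.5),
        Recommendation("proj-b", "cluster-2", "api", 3, 1.0, 1.0),
    ]
    ranked = sorted(summarize(recs).items(), key=lambda item: item[1], reverse=True)
    for (project, cluster), cores in ranked:
        print(f"{project}/{cluster}: {cores:+.2f} cores")
```

A negative delta matters as much as a positive one: it marks workloads whose requests are below the recommendation and that may be at risk for reliability issues.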

In conclusion

In short, rightsizing your workloads is essential for both cost savings and reliability. By following the tips in this blog, you can ensure that your workloads request the right amount of resources, which saves you money and makes them more reliable.

Links to the solution presented in this blog and other useful tools to help you optimize your cluster are listed below:

- The Right-sizing workloads at scale solution guide

- Setting resource requests: the key to Kubernetes cost optimization

- The simple kube-requests-checker tool

- An interactive tutorial to get set up in GKE with a set of sample workloads

Download the State of Kubernetes Cost Optimization report, review the key solutions, and follow Gimasys and Google's next blog post!